Engineering leader specializing in threat detection, security engineering, and building enterprise B2B systems at scale. Deep hands-on roots in software architecture and AI tooling; currently exploring the frontier of AI agents as co-founder of AI Agent Lens.

12

Posts

4

Slides

2

Guides

8

Contributions

2

Comments

Posts

12

Featured

The Knowledge Spine: Why Organized Context Beats Raw Intelligence for AI Code Quality

Featured

Claude Mythos Just Changed Cybersecurity

The Complete Engineer's Guide to AI Agents

The SaaS Moat is Draining

Securing AI Agents: From Code Scanning to Runtime Enforcement

Featured

Tests Are the New Source Code

When "SSL Handshake Failed (525)" Isn't Actually SSL

Featured

My Server Got Cryptojacked Through a Next.js Vulnerability

Autoscaling Revisited: LLMs, MCP, and the Stack

Featured

The Open-Source Autoscaling Stack in 2024

Autoscaling From the Inside: Seven Years at Turbonomic

Featured

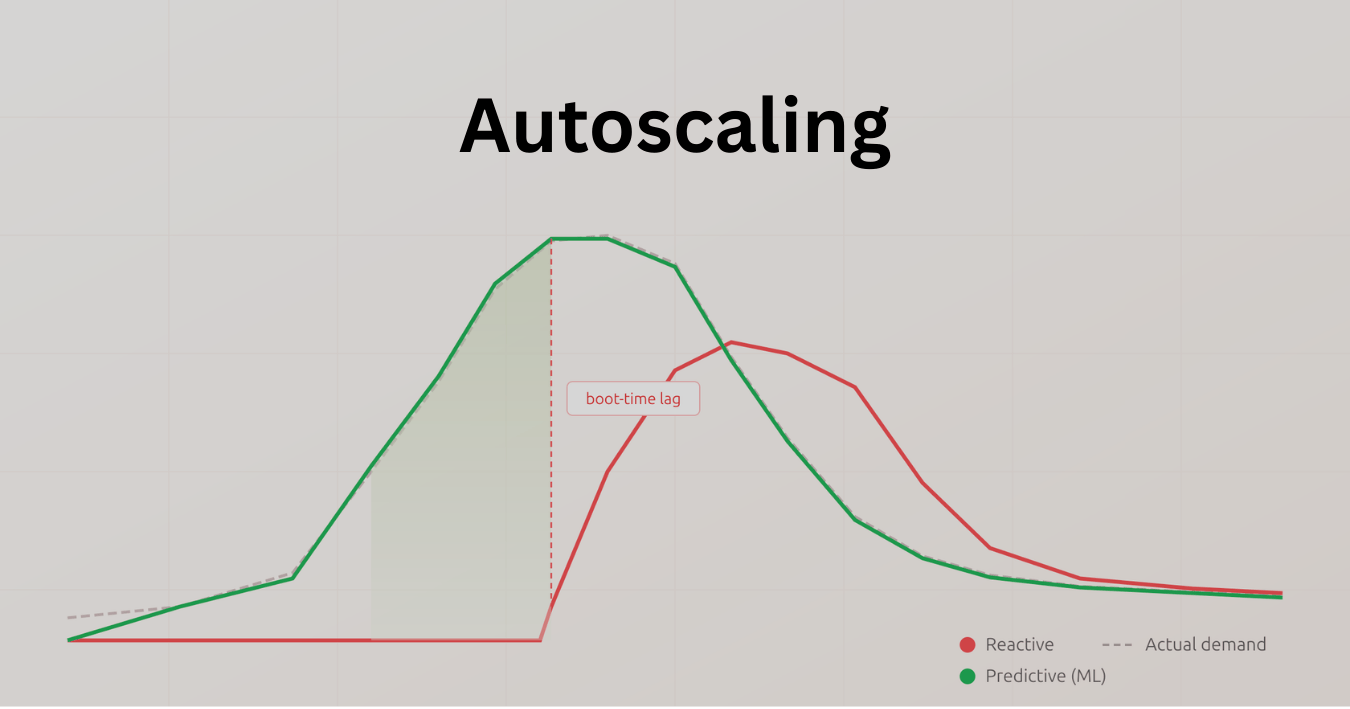

Why Reactive Autoscaling Isn't Enough — and How ML Changes That

Presentations

4

Guides

2

Contributions

8

Your Agent Passed the Eval. That's the Bug

The MCP Credential Theft Surface You Didn't Know You Had

Why We're Open-Sourcing AgentShield

The 6 Layers Between Your AI Agent and `rm -rf /`

The Noise Is the Problem

MCP Is Everywhere. So Are Its Attack Surfaces.

From Vibe-Coded App to SOC 2 Audit in 60 Seconds

Your MCP Server Can Read Your iMessages